AI-powered TDS reconciliation for a compliance SaaS workflow.

The product surfaced differences across Form 26AS, AIS, internal TDS deduction records, books, and return preparation data, but users still had to investigate every row manually. The hard work was not spotting a difference; it was finding the likely reason, deciding what to do next, and explaining the issue clearly to clients or deductors.

The goal was to turn static reconciliation reports into prioritized, explainable, action-ready workflows: classify mismatches, show confidence, recommend corrective action, generate communication drafts, and preserve authorized user approval before anything leaves the system.

What shipped

Move from flat reports to an action queue

The system reads Form 26AS, AIS, internal deduction records, books data, and linked return records, then groups mismatches by the work they require. Timing differences, filing delays, amount variances, duplicate entries, missing credits, internal books mismatches, and low-confidence exceptions stop looking like one long spreadsheet and become a prioritized review queue.
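As a rough illustration of the grouping step described above, the sketch below turns a flat list of mismatches into an ordered queue. The `Mismatch` type, the category identifiers, and the `PRIORITY` ordering are assumptions made for the example; the production ordering is not described here.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical review order (lower number = reviewed first).
PRIORITY = {
    "low_confidence_exception": 0,
    "missing_credit": 1,
    "amount_variance": 2,
    "duplicate_entry": 3,
    "internal_books_mismatch": 4,
    "filing_delay": 5,
    "timing_difference": 6,
}


@dataclass
class Mismatch:
    record_id: str
    category: str
    amount_at_stake: float


def build_queue(mismatches):
    """Group mismatches by category, then order the groups by priority."""
    groups = defaultdict(list)
    for m in mismatches:
        groups[m.category].append(m)

    queue = []
    # Unknown categories sort after every known one.
    for category in sorted(groups, key=lambda c: PRIORITY.get(c, len(PRIORITY))):
        # Within a group, surface the largest amounts first.
        rows = sorted(groups[category], key=lambda m: m.amount_at_stake, reverse=True)
        queue.append((category, rows))
    return queue
```

Each queue entry is a `(category, rows)` pair, so a reviewer can work through one type of problem at a time instead of scanning mixed rows.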

Explain the likely reason, not just the delta

The mismatch agent compares deductor name, TAN, PAN, section, amount, date, quarter, challan, and transaction context. Each exception gets a reason label and confidence score so routine items can move quickly while high-value or uncertain cases go to a senior reviewer.
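To make the idea of a reason label plus confidence score concrete, here is a minimal rule-based stand-in. It is not the production agent: the `TdsEntry` fields are a subset of those listed above, and the rules and confidence values are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class TdsEntry:
    tan: str
    pan: str
    section: str
    quarter: str
    amount: float
    txn_date: date


def label_mismatch(reported: TdsEntry, internal: TdsEntry):
    """Return (reason_label, confidence) for two entries that should match.

    All confidence values are made-up numbers for illustration.
    """
    same_party = reported.tan == internal.tan and reported.pan == internal.pan
    if not same_party:
        # Cannot even tie the rows to the same deductor and taxpayer.
        return ("low_confidence_exception", 0.30)

    if abs(reported.amount - internal.amount) > 0.01:
        # Same parties, different amount; a section mismatch lowers confidence.
        confidence = 0.85 if reported.section == internal.section else 0.55
        return ("amount_variance", confidence)

    if reported.quarter != internal.quarter:
        # Same amount recorded in different quarters.
        return ("timing_difference", 0.80)

    return ("no_mismatch", 1.0)
```

The point of the sketch is the shape of the output: every exception carries both a label and a number that downstream routing can act on.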

Draft the next move, then wait for approval

For each group, the agent recommends what should happen next: contact the deductor, wait for a revised statement, update internal books, compare source values, or escalate. It can also generate client-ready summaries and follow-up drafts, but the authorized user reviews, edits, and approves the final action.
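The recommend-then-approve pattern above can be sketched as follows. The mapping from category to action is an assumption (the text lists the actions but not which category triggers which), and `Draft`, `approve`, and `send` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed mapping; anything unmapped is escalated.
NEXT_ACTION = {
    "missing_credit": "contact the deductor",
    "filing_delay": "wait for a revised statement",
    "internal_books_mismatch": "update internal books",
    "amount_variance": "compare source values",
}


@dataclass
class Draft:
    category: str
    body: str
    approved_by: Optional[str] = None


def recommend(category: str) -> str:
    """Suggest a next step; the user still decides what actually happens."""
    return NEXT_ACTION.get(category, "escalate")


def approve(draft: Draft, user: str, edited_body: Optional[str] = None) -> Draft:
    """Record the approving user, applying any edits they made first."""
    if edited_body is not None:
        draft.body = edited_body
    draft.approved_by = user
    return draft


def send(draft: Draft) -> str:
    """Refuse to release anything that has not been approved."""
    if draft.approved_by is None:
        raise PermissionError("draft has not been approved by an authorized user")
    return f"sent (approved by {draft.approved_by}): {draft.body}"
```

Putting the check inside `send` rather than in the UI means an unapproved draft cannot leave the system through any code path that uses it.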

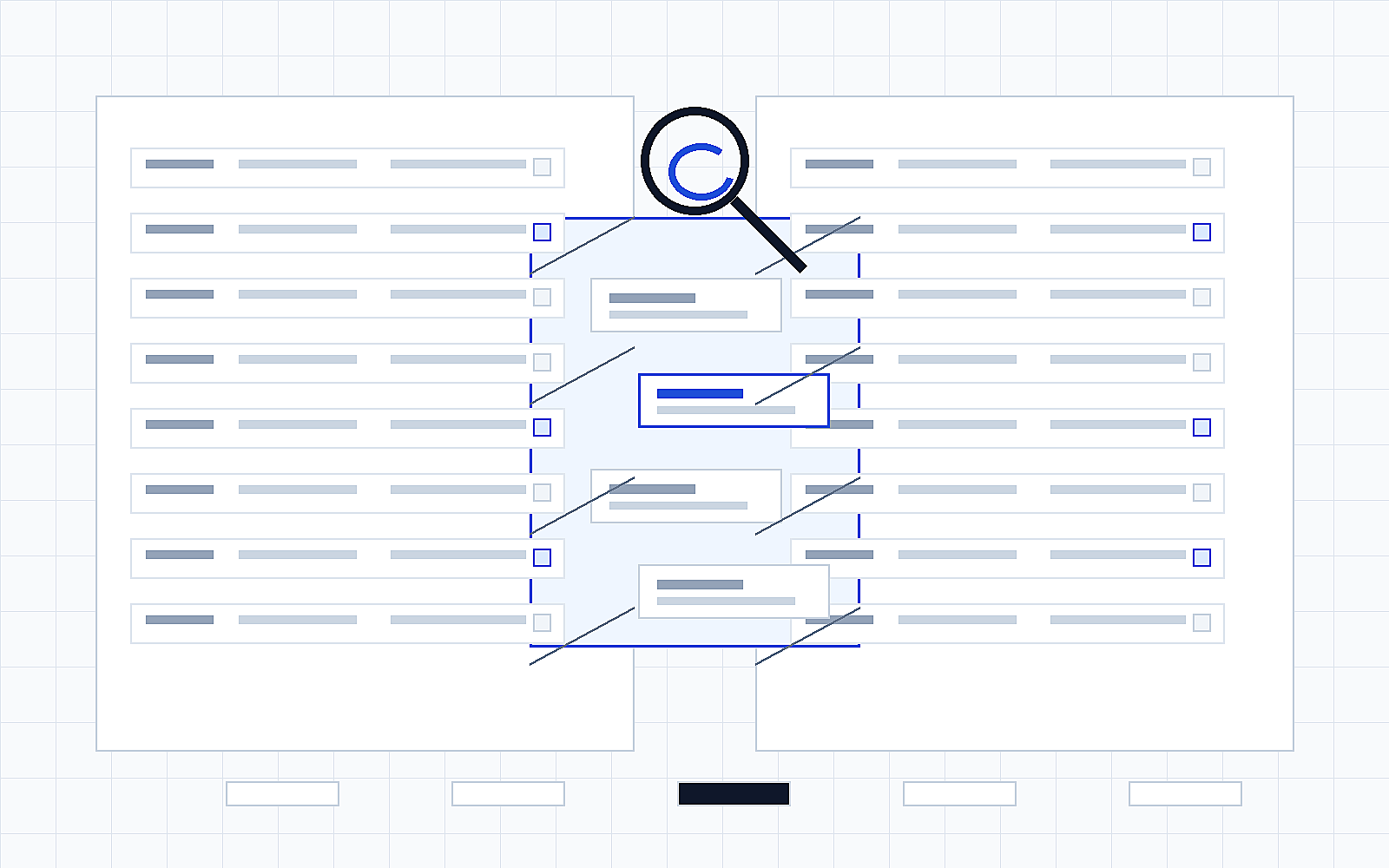

What it looked like in action

Representative mockup using anonymized sample data. The interaction patterns reflect the production flows; names, amounts, IDs, and dates are illustrative.

Business outcomes

Technical capabilities demonstrated

The systems and controls behind the story above.

Multi-source reconciliation engine

Records are compared across Form 26AS, AIS, internal TDS deduction records, books, and return data using deductor, TAN, PAN, section, quarter, challan, date, amount, and transaction context.
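One simple way to compare several sources is to total amounts per matching key and flag keys where the sources disagree. The sketch below assumes each row is a dict with `tan`, `pan`, `section`, `quarter`, and `amount` fields; those field names, and the choice of a four-field key, are assumptions for the example.

```python
from collections import defaultdict
from decimal import Decimal

KEY_FIELDS = ("tan", "pan", "section", "quarter")


def match_key(row):
    """Build a case- and whitespace-insensitive comparison key."""
    return tuple(str(row[f]).strip().upper() for f in KEY_FIELDS)


def compare_sources(sources):
    """sources maps a source name to a list of row dicts.

    Returns {key: {source_name: total_or_None}} for every key whose
    totals differ between sources or which is missing from a source.
    """
    totals = defaultdict(dict)
    for name, rows in sources.items():
        for row in rows:
            key = match_key(row)
            # Decimal avoids float rounding noise when summing money.
            amount = Decimal(str(row["amount"]))
            totals[key][name] = totals[key].get(name, Decimal("0")) + amount

    differences = {}
    for key, per_source in totals.items():
        amounts = [per_source.get(name) for name in sources]
        if len(set(amounts)) > 1:
            differences[key] = dict(zip(sources, amounts))
    return differences
```

A `None` total means the key never appeared in that source, which is how a missing credit would show up in this sketch.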

Root-cause classification with confidence scoring

The AI layer labels mismatches as timing differences, filing delays, amount variances, duplicates, missing credits, internal books mismatches, or low-confidence exceptions, and attaches a confidence score to each label.

Exception workflow orchestration

Routine mismatches are grouped and prioritized; low-confidence or material exceptions are routed for senior review before user-facing output is finalized.
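The routing rule can be as small as two thresholds. In this sketch the function name, the queue names, and both default thresholds are hypothetical; a real deployment would set its own confidence floor and materiality limit.

```python
def route(confidence: float, amount: float,
          confidence_floor: float = 0.7,
          materiality: float = 100_000.0) -> str:
    """Send uncertain or material exceptions to a senior reviewer.

    Both thresholds are illustrative defaults, not production values.
    """
    if confidence < confidence_floor or amount >= materiality:
        return "senior_review"
    return "routine_queue"
```

For example, `route(0.9, 5_000.0)` stays in the routine queue, while either a low score or a large amount on its own is enough to escalate.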

Source-linked communication generation

Client summaries and deductor follow-up drafts are generated with source references so users can verify the evidence before sending.
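A source-linked draft can be pictured as ordinary message text with the supporting record references appended. The template wording, `SourceRef`, and `draft_follow_up` below are assumptions for illustration, not the product's actual output format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    source: str      # e.g. "Form 26AS", "AIS", "books"
    record_id: str   # hypothetical identifier of the underlying row


def draft_follow_up(deductor: str, quarter: str, reason: str, refs) -> str:
    """Build an editable follow-up note that lists its evidence."""
    lines = [
        f"Subject: TDS mismatch for {quarter}",
        "",
        f"Dear {deductor},",
        f"Our reconciliation shows a likely {reason} for {quarter}.",
        "Please review the records referenced below and confirm.",
        "",
        "Sources:",
    ]
    lines += [f"- {r.source}: {r.record_id}" for r in refs]
    return "\n".join(lines)
```

Because the references travel with the text, the reviewer can check each cited record before approving the note.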

Audit-ready approval trail

Suggestions, user edits, approvals, exports, and final actions are logged against the source records and model output used to generate them.
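One possible shape for such a log entry is sketched below. Storing a SHA-256 digest of the model output, rather than the text itself, is a design choice made for this example, and the `AuditLog` class and its field names are hypothetical.

```python
import hashlib
from datetime import datetime, timezone


class AuditLog:
    """Append-only, in-memory log (a stand-in for durable storage)."""

    def __init__(self):
        self._entries = []

    def record(self, event: str, user: str, source_record_ids, model_output: str):
        entry = {
            "event": event,  # e.g. "suggestion", "edit", "approval", "export"
            "user": user,
            "source_record_ids": sorted(source_record_ids),
            # The digest ties the entry to the exact model text that was shown.
            "model_output_sha256": hashlib.sha256(
                model_output.encode("utf-8")
            ).hexdigest(),
            "logged_at": datetime.now(timezone.utc).isoformat(),
        }
        self._entries.append(entry)
        return entry

    def entries(self):
        # Return copies so callers cannot rewrite history.
        return [dict(e) for e in self._entries]
```

Recording the source record IDs alongside the digest lets an auditor reconstruct which evidence and which suggestion led to a given approval.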

Role-aware compliance controls

Access, retention, and taxpayer-data handling follow the permissions the SaaS product already enforces for tax professionals, finance teams, and compliance users.

Architecture

Compliance source model

Government-reported records, internal deductions, books data, and return preparation context are normalized into a comparison-ready source model with amount, deductor, taxpayer, quarter, challan, and date-level detail.
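A comparison-ready model usually means every source is mapped to one typed record before matching. The sketch below assumes raw rows arrive as string dicts with `tan`, `pan`, `quarter`, `challan`, `date` (in `DD-MM-YYYY`), and `amount` keys; the raw layout and the `SourceRecord` type are assumptions for the example.

```python
from dataclasses import dataclass
from datetime import date, datetime
from decimal import Decimal


@dataclass(frozen=True)
class SourceRecord:
    source: str          # e.g. "form_26as", "ais", "books", "return"
    deductor_tan: str
    taxpayer_pan: str
    quarter: str
    challan: str
    txn_date: date
    amount: Decimal


def normalize(source: str, raw: dict) -> SourceRecord:
    """Map one raw row to the shared model (raw field names are assumed)."""
    return SourceRecord(
        source=source,
        deductor_tan=raw["tan"].strip().upper(),
        taxpayer_pan=raw["pan"].strip().upper(),
        quarter=raw["quarter"].strip().upper(),
        challan=raw["challan"].strip(),
        txn_date=datetime.strptime(raw["date"].strip(), "%d-%m-%Y").date(),
        # Strip thousands separators before parsing the amount.
        amount=Decimal(raw["amount"].replace(",", "").strip()),
    )
```

Normalizing case, whitespace, dates, and amounts up front keeps later comparisons from flagging differences that are only formatting.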

Mismatch reasoning agent

The agent classifies each exception by likely cause, attaches confidence, groups related rows, and turns raw differences into a review-ready action list for tax professionals and finance teams.

User approval and communication controls

Draft summaries and follow-up notes stay editable and source-linked. Suggestions, edits, approvals, and exports are logged so the workflow remains auditable.

Assistant, not decision-maker

The AI classifies, explains, recommends, and drafts; authorized users decide the final reconciliation position and communication.

Source references

Generated explanations and drafts link back to the Form 26AS, AIS, books, or return records used for the suggestion.

Confidence-led review

Low-confidence, high-value, or unusual exceptions are routed for senior review instead of being treated as routine.

Taxpayer-data controls

Role-based access, retention rules, and audit logs remain in force for sensitive compliance records.